Palo Alto, California

Zyphra is excited to release Zamba2-small, a 2.7B-parameter state-of-the-art (SOTA) small language model for on-device applications.

Paolo Glorioso, Quentin Anthony, Yury Tokpanov, James Whittington, Jonathan Pilault, Beren Millidge

Zamba2-small Highlights

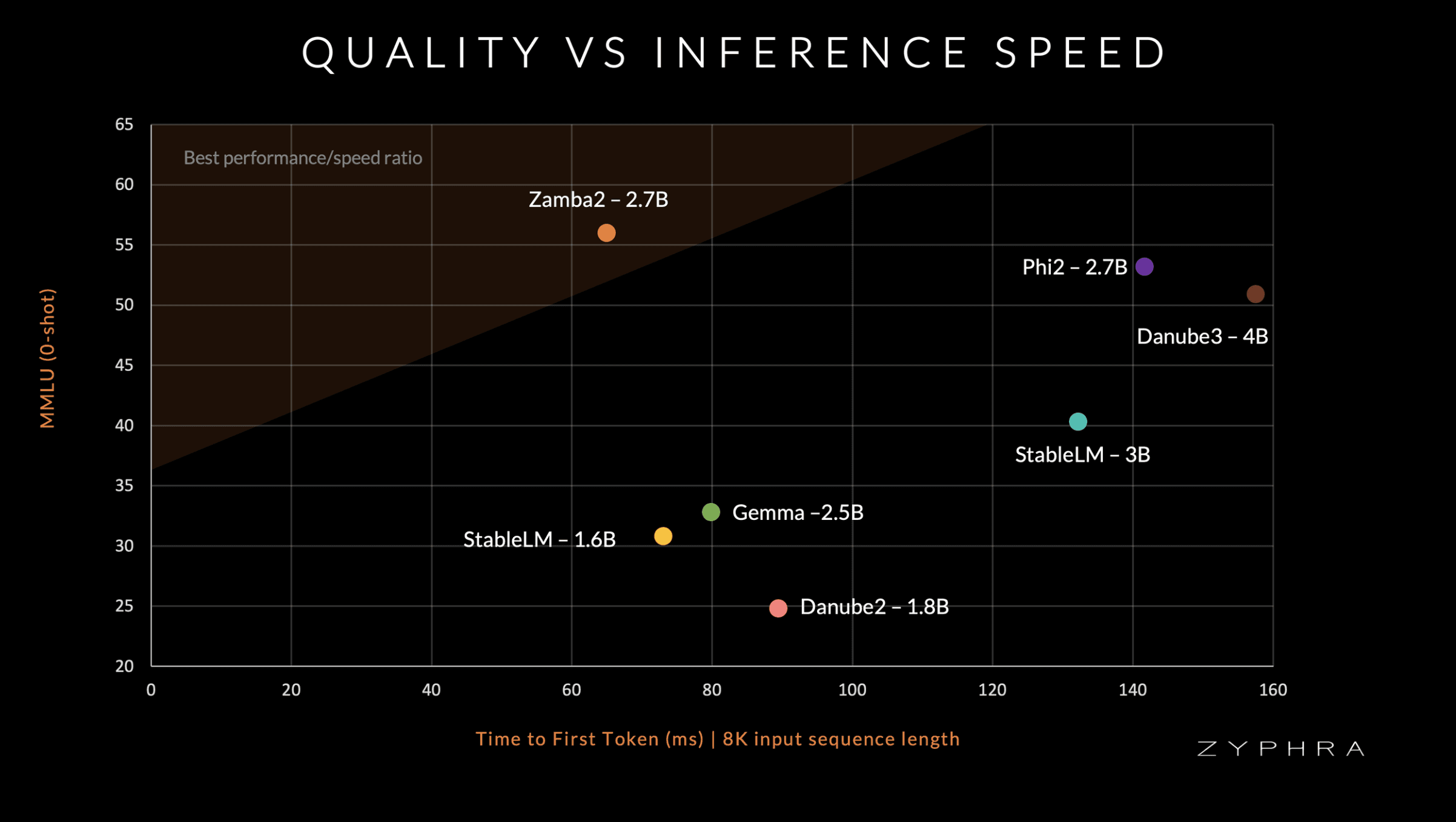

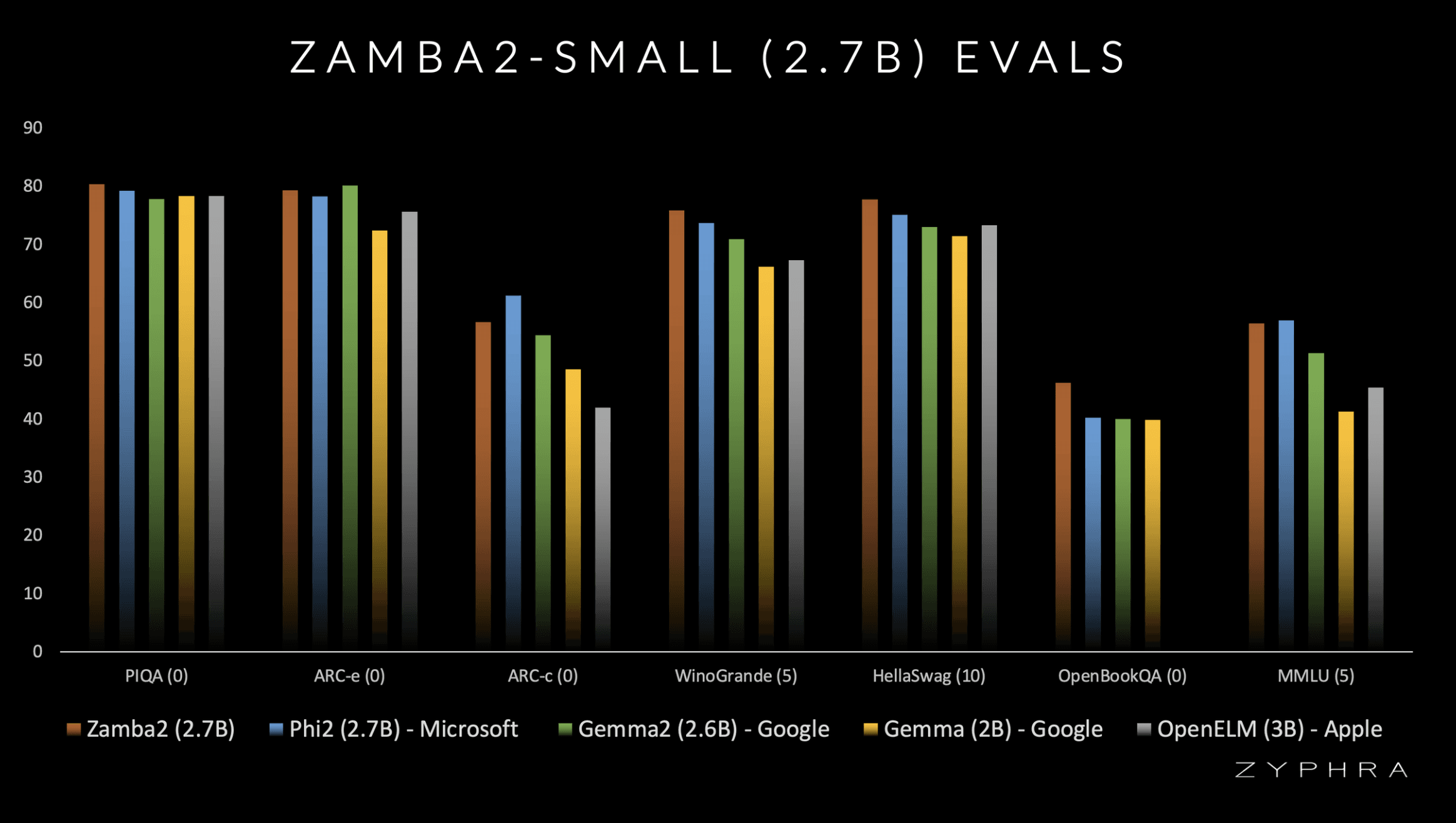

Zamba2-small (2.7B) achieves SOTA evaluation benchmark performance and superior inference efficiency compared to models of a similar scale such as Gemma2-2.7B (Google), StableLM-3B (StabilityAI), OpenELM-3B (Apple) and Phi2-2.7B (Microsoft).

Architectural improvements over Zamba-7B:

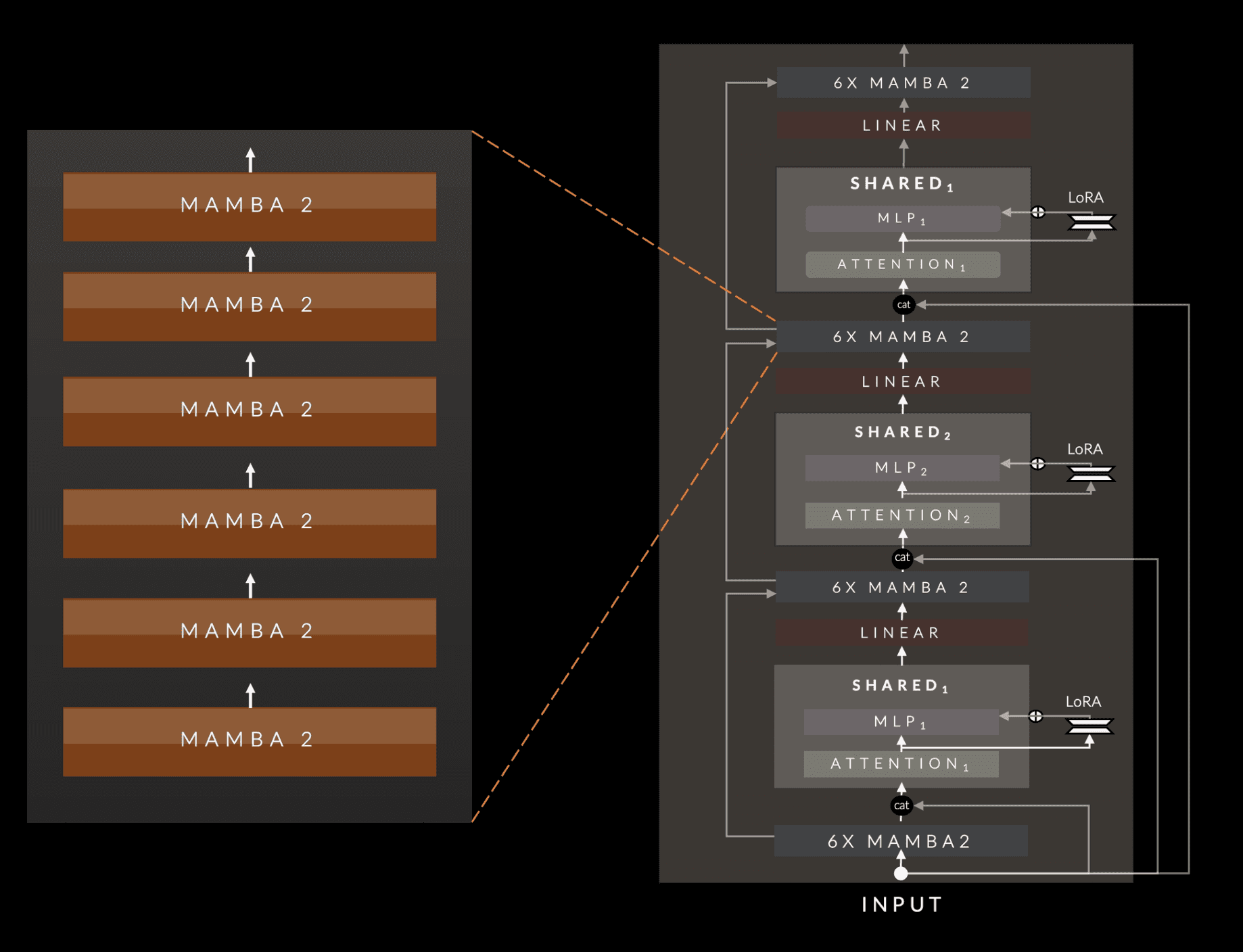

Mamba1 blocks have been replaced with Mamba2 blocks.

Instead of a single shared attention block, we use two shared attention blocks, interleaved in an ABAB pattern throughout the network.

We apply a LoRA projector to each shared MLP block, which allows the network to specialize the MLPs at each invocation of the shared layer across depth.
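To make the ABAB interleaving concrete, here is a minimal sketch of how such a layer schedule could be laid out. The function name, the number of Mamba2 blocks, the spacing between shared blocks, and the placement of a shared block after (rather than before) a group of Mamba2 blocks are all illustrative assumptions, not Zamba2's actual configuration.

```python
from typing import List


def build_layer_schedule(num_mamba_blocks: int, shared_every: int) -> List[str]:
    """Return a flat list of layer names for a Zamba2-style stack.

    A shared block is inserted after every `shared_every` Mamba2 blocks, and
    the two shared blocks alternate: A, B, A, B, ...
    Both arguments are hypothetical values chosen for illustration.
    """
    shared_names = ("shared_A", "shared_B")
    schedule: List[str] = []
    invocation = 0
    for i in range(1, num_mamba_blocks + 1):
        schedule.append(f"mamba2_{i}")
        if i % shared_every == 0:
            # The same two sets of weights are reused at every call; only the
            # LoRA adapter (indexed by invocation number) is unique per call.
            name = shared_names[invocation % 2]
            schedule.append(f"{name}+lora_{invocation}")
            invocation += 1
    return schedule


if __name__ == "__main__":
    for layer in build_layer_schedule(num_mamba_blocks=12, shared_every=3):
        print(layer)
```

Each `shared_A` entry refers to one set of weights and each `shared_B` entry to another, so the parameter cost of the shared blocks does not grow with the number of invocations; only the small per-invocation LoRA adapters do.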

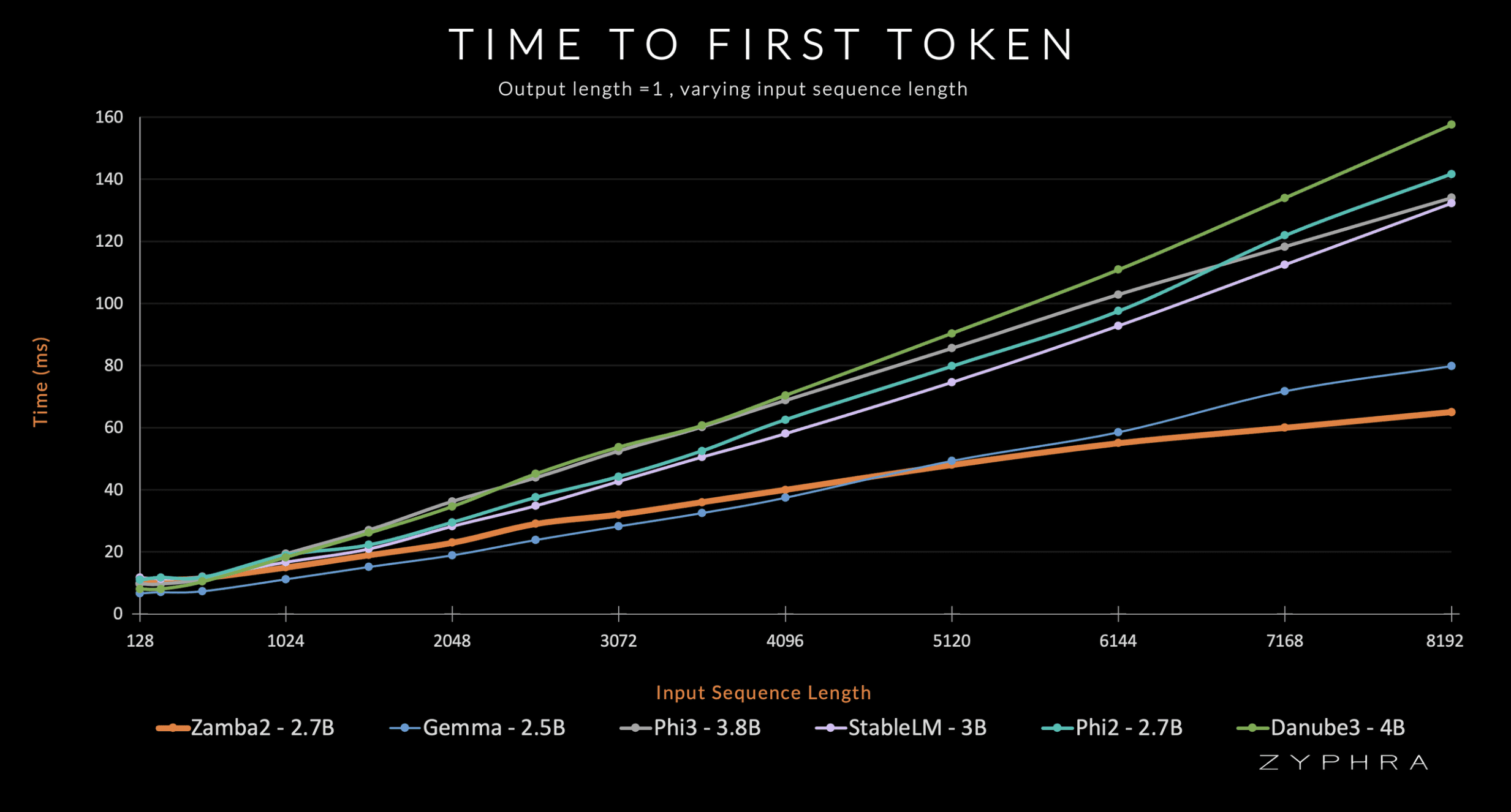

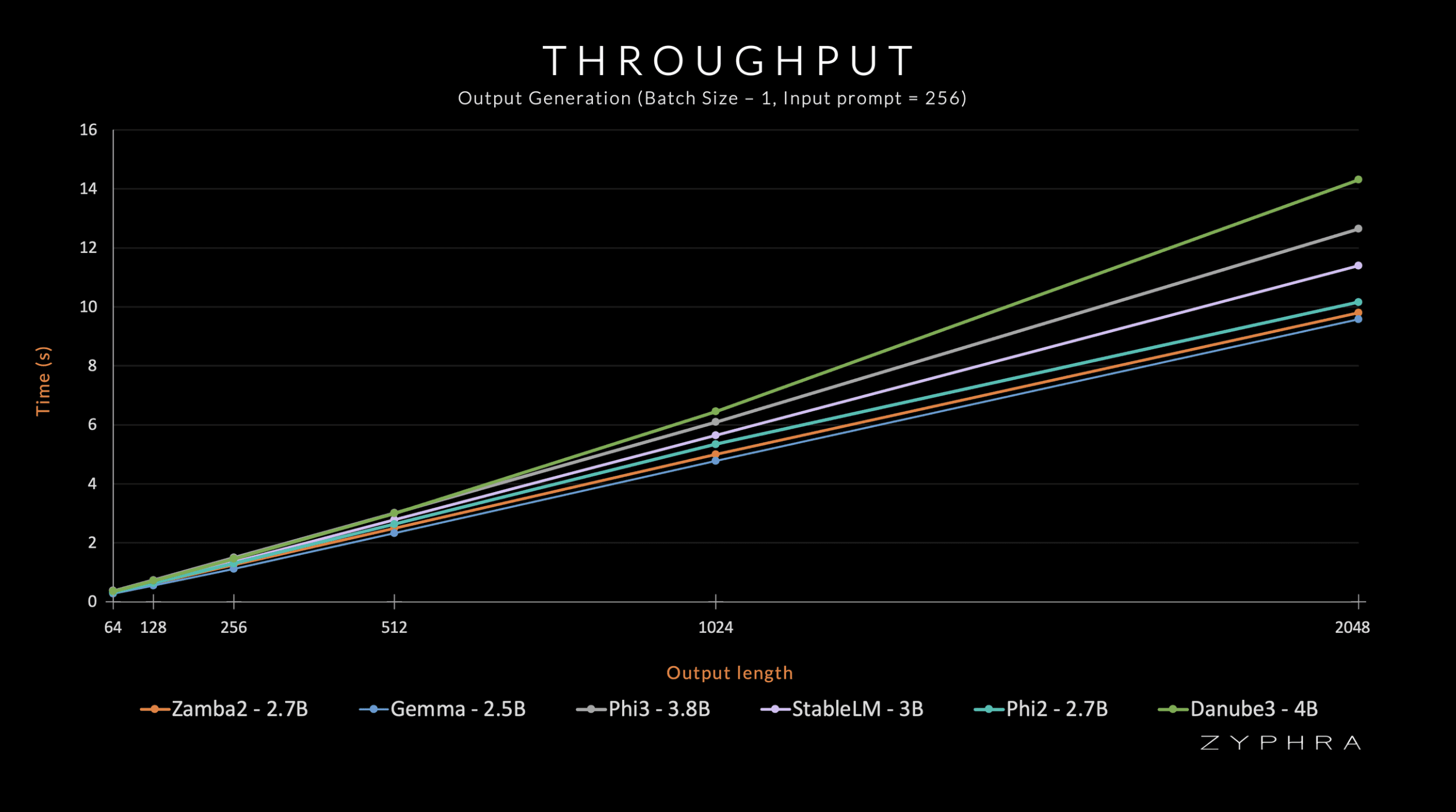

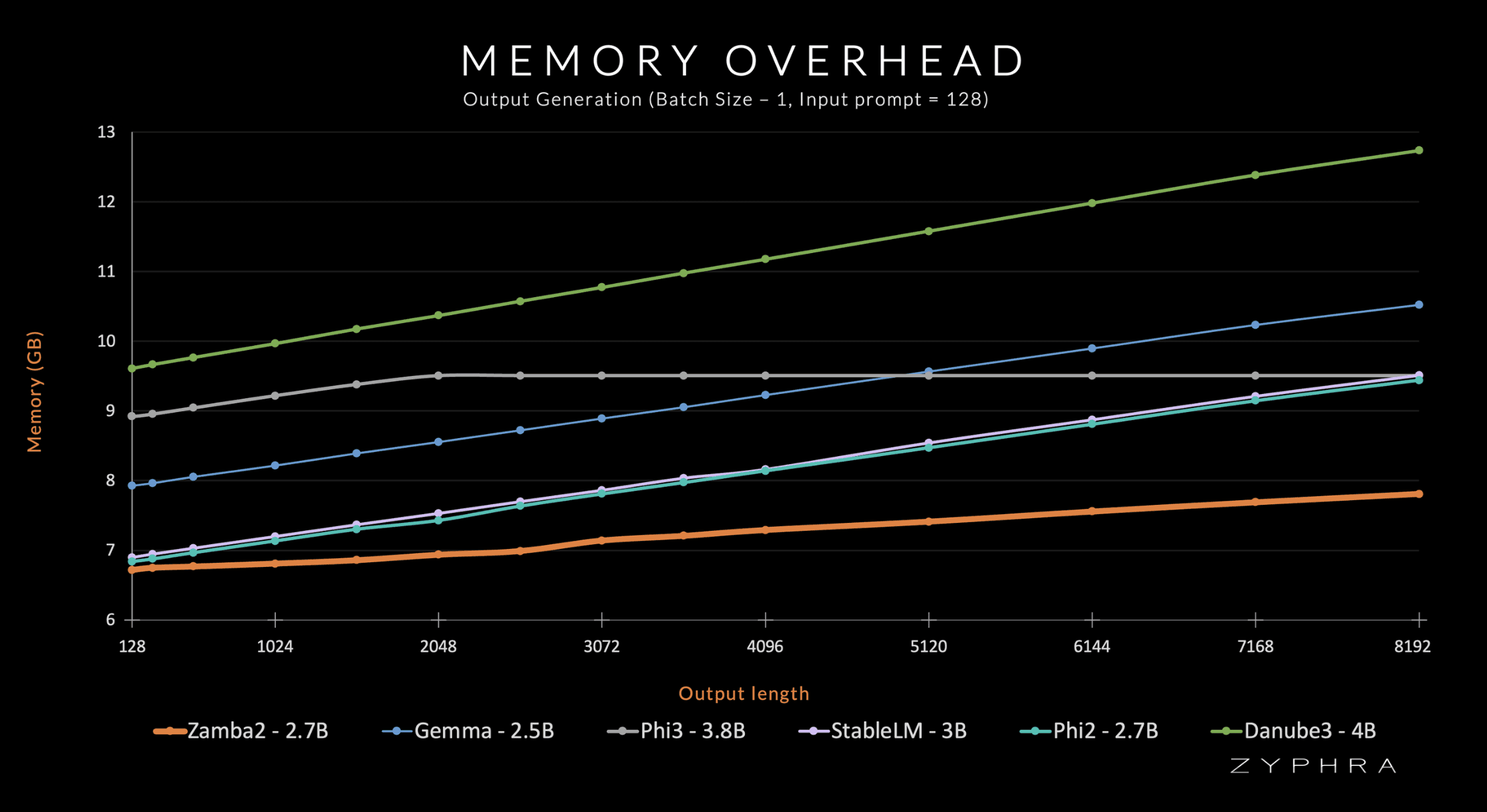

Zamba2-small is extremely inference-efficient, achieving 2x faster time-to-first-token, a 27% reduction in memory overhead, and 1.29x lower generation latency compared to Phi3-3.8B.

We release the model weights open-source (Apache 2.0).

Zamba2-small (2.7B) Efficiency

Zamba2-small maintains the quality of a 3-4B dense transformer while only requiring the inference compute and memory of a 1-2B dense transformer.

Much of our focus in designing hybrid models is on retaining the best of both worlds: the efficiency of SSM/RNN architectures and the quality of the transformer architecture. Some of the main contributing factors to Zamba2's benefits over comparable dense transformers are:

Model Quality:

The shared transformer block allows more parameters to be allocated to the Mamba2 backbone. In turn, the shared transformer block preserves the rich cross-sequence dependencies of the attention computation.

Our 3 trillion token pre-training dataset is composed of a combination of Zyda and openly available datasets that are thoroughly filtered and deduplicated.

We have a separate "annealing" pre-training phase, which aggressively decays the learning rate over 100B high-quality tokens.
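The two-phase training recipe described above can be pictured as a learning-rate schedule with a slow main phase followed by a short, steep annealing phase. The sketch below is only an illustration: the token counts come from the text, but the cosine and exponential shapes, the absence of warmup, the assumption that annealing starts at the main-phase floor, and every learning-rate value are hypothetical.

```python
import math

MAIN_TOKENS = 3_000_000_000_000   # ~3T pre-training tokens (from the text)
ANNEAL_TOKENS = 100_000_000_000   # 100B annealing tokens (from the text)


def learning_rate(tokens_seen: int,
                  peak_lr: float = 3e-4,     # hypothetical peak value
                  main_floor: float = 1e-4,  # hypothetical LR at end of main phase
                  final_lr: float = 1e-6) -> float:  # hypothetical final value
    """Return the learning rate after `tokens_seen` training tokens."""
    if tokens_seen < MAIN_TOKENS:
        # Assumed main phase: slow cosine decay from peak_lr to main_floor.
        progress = tokens_seen / MAIN_TOKENS
        cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
        return main_floor + (peak_lr - main_floor) * cosine
    # Assumed annealing phase: exponential decay from main_floor to final_lr
    # over a token budget 30x smaller than the main phase.
    progress = min((tokens_seen - MAIN_TOKENS) / ANNEAL_TOKENS, 1.0)
    return main_floor * (final_lr / main_floor) ** progress


if __name__ == "__main__":
    for tokens in (0, MAIN_TOKENS // 2, MAIN_TOKENS,
                   MAIN_TOKENS + ANNEAL_TOKENS // 2,
                   MAIN_TOKENS + ANNEAL_TOKENS):
        print(f"{tokens:>16,d} tokens -> lr = {learning_rate(tokens):.2e}")
```

Under these assumptions the annealing phase covers two orders of magnitude of learning-rate decay in roughly 3% of the total token budget, which is the sense in which the decay is "aggressive".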

Inference Efficiency:

Mamba2 blocks are extremely efficient, and have roughly 4 times the throughput of an equal-parameter transformer block.

Mamba blocks only have small hidden states to store and don't require a KV-cache, so we only need to store KV states for the invocations of the shared attention block.

We choose model sizings that are very amenable to parallelization on modern hardware (i.e. multiple streaming multiprocessors on GPUs, multiple cores on CPUs).
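The KV-cache point above can be illustrated with a rough back-of-the-envelope calculation. The sketch below uses the standard estimate of two cached tensors (keys and values) per attention layer. The layer counts, head counts, head dimension, and sequence length are hypothetical and are not Zamba2's real configuration; the small fixed-size Mamba state is ignored.

```python
def kv_cache_bytes(num_attn_layers: int,
                   seq_len: int,
                   num_kv_heads: int,
                   head_dim: int,
                   bytes_per_value: int = 2,  # e.g. 16-bit floats
                   batch_size: int = 1) -> int:
    """Approximate KV-cache size: one K and one V tensor per attention layer."""
    return (2 * num_attn_layers * batch_size * seq_len
            * num_kv_heads * head_dim * bytes_per_value)


if __name__ == "__main__":
    # Hypothetical dense transformer: every one of its 32 layers caches K and V.
    dense = kv_cache_bytes(num_attn_layers=32, seq_len=4096,
                           num_kv_heads=32, head_dim=80)
    # Hypothetical hybrid: only 6 invocations of the shared attention blocks
    # cache K and V. Each invocation needs its own cache even though the
    # weights are shared, because its inputs differ.
    hybrid = kv_cache_bytes(num_attn_layers=6, seq_len=4096,
                            num_kv_heads=32, head_dim=80)
    print(f"dense : {dense / 2**20:.0f} MiB")
    print(f"hybrid: {hybrid / 2**20:.0f} MiB")
    print(f"ratio : {hybrid / dense:.3f}")
```

Because the cache grows linearly with both sequence length and the number of attention invocations, reducing the number of invocations lowers memory use by the same factor at every context length.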

Given these results, we believe Zamba2-2.7B offers a significant improvement over comparable small language models and is especially suited to on-device environments where memory capacity is constrained and inference speed is paramount.

Zamba2-small (2.7B) Evaluations

Zamba2-small (2.7B) Inference Performance

Zamba2-small (2.7B) Architecture

Zamba2-2.7B utilizes and extends our original Zamba hybrid SSM-attention architecture. The core Zamba architecture consists of a backbone of Mamba layers interleaved with one or more shared attention blocks (one in Zamba1, two in Zamba2). The weights of each shared block are reused at every invocation, which minimizes the parameter cost of the model. We find that concatenating the original model embeddings to the input of the attention block improves performance, likely because it better preserves information across depth. The Zamba2 architecture also applies LoRA projection matrices to the shared MLP, which adds some expressivity and lets each invocation of the shared block specialize slightly to its position in the network while keeping the additional parameter overhead small.
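As a rough illustration of why sharing an MLP and adding per-invocation LoRA matrices keeps the overhead small, the sketch below compares parameter counts. It assumes a plain two-matrix MLP (up and down projection, no biases or gating) with a rank-r LoRA pair on both matrices. The dimensions, rank, and invocation count are hypothetical, and the text does not say which matrices the LoRA projectors are applied to.

```python
def linear_params(d_in: int, d_out: int) -> int:
    """Parameters in a dense weight matrix without bias."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in a LoRA pair: A is (d_in x rank), B is (rank x d_out)."""
    return rank * (d_in + d_out)


def mlp_params_unshared(d_model: int, d_ff: int, num_invocations: int) -> int:
    """A separate full MLP at every position where the block is invoked."""
    per_mlp = linear_params(d_model, d_ff) + linear_params(d_ff, d_model)
    return num_invocations * per_mlp


def mlp_params_shared_lora(d_model: int, d_ff: int,
                           num_invocations: int, rank: int) -> int:
    """One shared MLP plus a distinct LoRA pair per matrix per invocation."""
    shared = linear_params(d_model, d_ff) + linear_params(d_ff, d_model)
    per_invocation = (lora_params(d_model, d_ff, rank)
                      + lora_params(d_ff, d_model, rank))
    return shared + num_invocations * per_invocation


if __name__ == "__main__":
    # Hypothetical sizes, chosen only to show the order of magnitude.
    d_model, d_ff, invocations, rank = 2560, 10240, 6, 128
    unshared = mlp_params_unshared(d_model, d_ff, invocations)
    shared = mlp_params_shared_lora(d_model, d_ff, invocations, rank)
    print(f"unshared MLPs     : {unshared / 1e6:.1f}M parameters")
    print(f"shared MLP + LoRA : {shared / 1e6:.1f}M parameters")
```

The saving depends on the rank being much smaller than the model and feed-forward dimensions; with tiny dimensions or a large rank, the LoRA pairs can cost more than they save.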

Zamba2-2.7B was pretrained for approximately 3T tokens on a dataset composed of Zyda and open-access pre-training datasets (all aggressively filtered and deduplicated to ensure quality), then annealed on 100B of the highest-quality tokens.

Zamba2-2.7B is released under an open-source license (Apache 2.0), allowing researchers, developers, and companies to leverage its capabilities. We invite the broader AI community to explore Zamba's unique architecture and continue pushing the boundaries of efficient foundation models. Both a Hugging Face integration and a pure-PyTorch implementation are available.

Zyphra's team is committed to democratizing advanced AI systems, exploring novel architectures on the frontier of performance, and advancing the scientific study and understanding of powerful models. We look forward to collaborating with others who share our vision.